Dallas. Motorcade. Noon light. Phones already up.

The first shot lands before anyone understands what they are seeing. The second clip appears before the first official statement. By the time the motorcade stops, the event is already splintering across X, TikTok, YouTube, Telegram, Reddit. One angle shows the hit. Another claims the agents stepped aside. A slowed-down clip begins circulating with red circles and arrows. Someone names a suspect. Someone names the wrong suspect. Someone posts that the real story is the stand-down. Someone else says the shooter was about to expose everything.

No grainy Zapruder film. No long vacuum before the public sees the evidence. This time it is 4K, multi-angle, real-time, algorithmically accelerated. The first millions do not wait for confirmation. They watch, react, repost, speculate, accuse. Synthetic audio drops. A witness account appears that never existed. A hashtag hardens. A false frame picks up domestic voices. Within minutes, the question is no longer just what happened. The question is what kind of story people now believe they are living inside.

That is the real shock of a modern symbolic assassination.

The death is the trigger.

The narrative war is the event.

You are watching it happen in real time — on your phone, on the TV at the bar, on every screen in every airport. And so is everyone else. Two billion people receiving the same thirty seconds of footage, processing it through every prior belief, every grievance, every existing distrust they carry about the country and its institutions.

This paper is not about whether a president could be killed on camera in 2026. It is about what happens to a country when belief forms faster than verification, when adversaries arrive with pre-built narratives, when domestic influencers scale those narratives before institutions can establish basic facts, and when the damage does not stop at social media but spills into markets, logistics, public order, and trust itself.

In that environment, the first battle is not over evidence. It is over narrative structure. Lose that, and everything that follows — from suspect identification to national response to economic stability — unfolds inside a contaminated frame.

This paper uses a 2026 JFK-style assassination scenario as a pressure test. Not a prediction.

The mechanisms described here are not untested. Brian Thompson, the CEO of UnitedHealthcare, was fatally shot outside a Manhattan hotel in December 2024. Within hours, a cross-spectrum narrative had formed — left and right audiences arriving at sympathy for the attacker from entirely different directions, through entirely different grievance structures. The martyr pivot ran faster than the law enforcement response. The official story — a disaffected individual with a documented grudge — competed from the start with a dozen more emotionally satisfying explanations. Thompson is the clearest recent proof of concept: a high-visibility domestic assassination in which the narrative war began before the body was cold, and in which class grievance, not foreign adversary seeding, drove the first wave. The targeting curve has widened since. The pattern has not changed.

The goal is to make abstract adversary playbooks concrete and actionable for practitioners in OSINT, counter-narrative operations, crisis communications, and executive protection — and to make the underlying dynamics visible to anyone who watches the news and wonders how so many people end up believing such different versions of the same event.

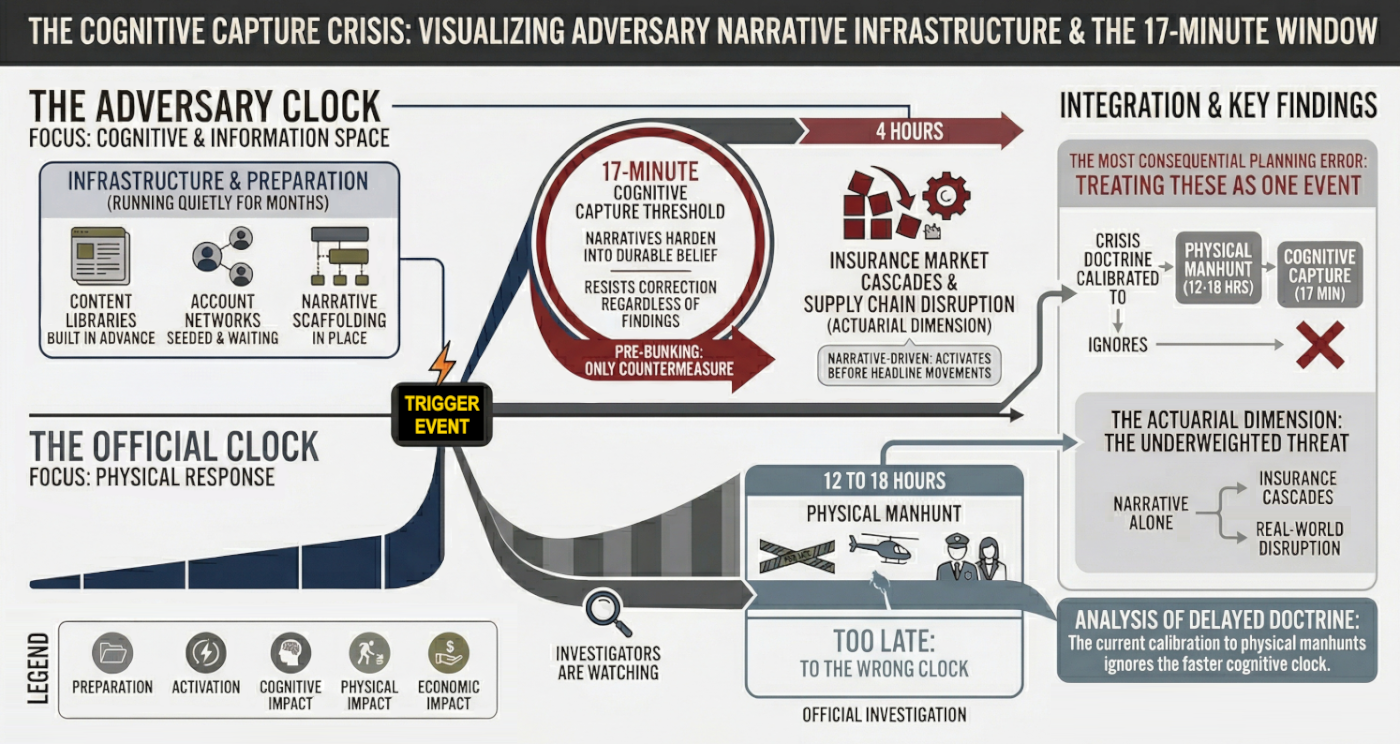

The event starts two clocks simultaneously. Only one of them matters for what comes next.

The first is the official clock. It tracks the physical crisis. Shots fired. Scene secured. Suspect identified. Manhunt launched. Evidence collected. Briefings prepared. This is the clock institutions understand. It runs for hours, sometimes days. It produces forensic conclusions. It names a perpetrator.

The second is the adversary clock. It tracks belief formation. First clip. First caption. First accusation. First false witness. First emotional frame that feels clear enough to repeat. This clock moves in minutes. It does not wait for forensic certainty. It does not need complete facts. It only needs enough ambiguity to turn shock into a story people can use.

Those clocks do not run at the same speed, and they do not decide the same outcome. The official clock may eventually determine who pulled the trigger. The adversary clock determines what millions of people think the event means — before the investigation has even begun.

That is the central problem this paper explores. The manhunt may last 12 to 18 hours. Cognitive capture begins far earlier. Treating those timelines as one is the most consequential planning error in modern crisis response.

"17 minutes" is an analytical shorthand for the cognitive capture threshold — the point at which narrative framing may harden into belief that resists correction regardless of what official investigations subsequently establish. The physical manhunt runs for hours or days. The narrative war is effectively set in its initial shape within the first quarter of an hour. These are not the same window and must not be treated as one.

The first 17 minutes are not important because they produce certainty. They matter because they produce structure. In a high-salience crisis, audiences do not wait for full verification. They reach for the first explanation that feels legible, emotionally usable, and socially repeatable. That initial frame is often established before institutions can verify basic facts — and once it is adopted by high-reach accounts, later correction loses leverage.

This is why the "17-minute threshold" should be treated as a planning threshold, not a literal constant. Its value is operational. If your response posture begins at 30 minutes, you are already operating inside an occupied information environment.

The Three-Stage Sequence

The threshold is best understood as a short sequence rather than a stopwatch.

| Phase | Duration | Primary Mechanism | Effective Countermeasure |
|---|---|---|---|
| Imprint | 0–17 min | Affect-driven processing; first-frame adoption before verification is possible | Pre-bunking; pre-approved holding statements deployable in under 5 minutes |
| Adoption | 17 min–4 hr | Reinforcement via newsbrokers; socialized belief spreading through high-reach amplifiers | Platform coordination; high-reach correction through pre-established trust and safety channels |
| Resistance | 4 hr–Day 3 | Narrative encampment; cognitive dissonance filtering out corrective information | Delegated authority communications; long-tail counter-narrative work |
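The phase boundaries in the table can be expressed as a simple lookup, which makes the planning point concrete: a response posture that starts at minute 30 is already past the Imprint window. This is an illustrative sketch only. It assumes "Day 3" means the end of hour 72, and the names `Phase`, `PHASES`, and `phase_at` are hypothetical.

```python
from typing import NamedTuple, Optional


class Phase(NamedTuple):
    """One row of the three-stage table; times are minutes since the trigger event."""
    name: str
    start_min: int  # inclusive lower bound
    end_min: int    # exclusive upper bound
    countermeasure: str


# Boundaries copied from the table. Assumption: "Day 3" is read as the end of hour 72.
PHASES = [
    Phase("Imprint", 0, 17,
          "Pre-bunking; pre-approved holding statements deployable in under 5 minutes"),
    Phase("Adoption", 17, 4 * 60,
          "Platform coordination; high-reach correction through "
          "pre-established trust and safety channels"),
    Phase("Resistance", 4 * 60, 72 * 60,
          "Delegated authority communications; long-tail counter-narrative work"),
]


def phase_at(minutes_since_trigger: float) -> Optional[Phase]:
    """Return the phase active at a given elapsed time, or None past the table's horizon."""
    if minutes_since_trigger < 0:
        raise ValueError("elapsed time cannot be negative")
    for phase in PHASES:
        if phase.start_min <= minutes_since_trigger < phase.end_min:
            return phase
    return None


if __name__ == "__main__":
    # A response posture that begins at 30 minutes lands in Adoption, not Imprint.
    for minute in (5, 30, 6 * 60):
        print(minute, phase_at(minute).name)
```

The half-open intervals mean minute 17 belongs to Adoption, not Imprint, which matches reading the threshold as the point where the first frame has already been adopted.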

"In fast-moving crisis environments, the first trusted frame often matters more than the first verified fact. By the time the verification arrives, the audience has already decided what kind of story it thinks it is hearing."

Bob Johnson, Former CIA Analyst

Why Audiences Adopt Contaminated Narratives

Falsehood alone does not explain why people adopt contaminated narratives. They adopt them because those narratives outperform verified information on three dimensions that matter in a crisis: perceived veracity, emotional appeal, and relevance. Research on crisis information consumption points to a model known as VER, after those three dimensions.

This explains why falsehood can dominate the environment even when verified facts exist. False claims are often more novel, more emotionally charged, and more identity-confirming than the truth. They travel faster, resolve more slowly, and remain socially useful long after being debunked. The result is not just misinformation — it is narrative contamination, where false or unverified frames become the public's working reality before institutions can establish an authoritative account.

You are not immune to this. Neither is anyone you know. The VER model describes a normal human stress response, not a character flaw. Under shock, every brain trades careful verification for fast emotional clarity. The practical implication is simple: the information that reaches you in the first hour of a major event is more likely to be incomplete or manipulated than what arrives later. Slow down before you share.

The Decline of Legacy Corroboration and the Rise of the Newsbroker

The modern crisis information environment is no longer organized around institutional confirmation. It is organized around speed, reach, and narrative brokerage.

In earlier media systems, legacy outlets played a larger corroboration role. They did not eliminate rumor, but they imposed delay, editorial friction, and a stronger norm of confirmation before amplification. That function has weakened. In the current environment, a small class of high-engagement influencer accounts, pseudonymous aggregators, and partisan interpreters increasingly acts as the first layer of narrative authority. These actors — often called newsbrokers — dominate crisis discourse by rapidly packaging speculation, clips, screenshots, and interpretive claims into shareable frames before traditional institutions can respond.

This is a structural shift, not a platform quirk. During fast-moving crises, the question is no longer simply whether official sources are trusted. It is whether they can even enter the conversation early enough to matter. Newsbrokers thrive inside that gap. They do not need to prove a theory. They need to name the moment first, give it emotional direction, and supply a frame that others can repeat. Once that happens, institutional statements are demoted from primary sensemaking tools to one input among many.

Legacy corroboration declines not only because trust has eroded, but because timing has collapsed. By the time a cautious institution confirms what happened, the public may already have accepted a brokered explanation that is more emotionally satisfying, more identity-aligned, and more socially portable. In that environment, the first narrative broker often matters more than the first verified source.

Adversary content may seed the initial frame, but domestic newsbrokers give it legitimacy, scale, and cultural fluency. Once they do, the foreign fingerprints matter less. The narrative has already been naturalized inside the domestic information ecosystem. Absence of detectable adversary origin is not evidence that the threat has passed. It is evidence the operation succeeded.

The 17-minute problem describes the compression of belief formation. The VER model explains why contaminated narratives are psychologically attractive under stress. The rise of the newsbroker explains who operationalizes that advantage in real time. The result is a crisis environment in which truth is not merely contested. It is structurally outpaced.

Observable Markers of Cognitive Capture

Watch for these signals — they indicate the cognitive battle has already begun:

- A stable accusation frame emerges before official verification

- The same core narrative appears across multiple platforms with audience-specific captioning

- High-reach domestic accounts begin repeating the frame without adversary attribution

- Misidentification converges around a named individual or small suspect pool

- Corrective content spreads more slowly than the original framing

- Users begin treating fabricated or unverified artifacts as already established fact
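For teams that log these signals during a live event, the list can be kept as a structured checklist rather than free-text notes. The sketch below is hypothetical: the class and field names are illustrative, not taken from any existing tool, and it encodes only the rule stated above, that any one marker means the cognitive battle has begun. Deciding whether a marker is actually present remains an analyst judgment.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class CaptureMarkers:
    """Analyst-recorded observations; each field mirrors one marker in the list above."""
    stable_accusation_before_verification: bool = False
    same_narrative_cross_platform_with_tailored_captions: bool = False
    domestic_accounts_repeat_without_attribution: bool = False
    misidentification_converges_on_named_person: bool = False
    corrections_spread_slower_than_original: bool = False
    unverified_artifacts_treated_as_fact: bool = False


def observed_markers(m: CaptureMarkers) -> List[str]:
    """Names of the markers flagged as present, in checklist order."""
    return [f.name for f in fields(m) if getattr(m, f.name)]


def cognitive_battle_begun(m: CaptureMarkers) -> bool:
    """Per the text, any single marker indicates the cognitive battle is already underway."""
    return len(observed_markers(m)) > 0


if __name__ == "__main__":
    snapshot = CaptureMarkers(
        stable_accusation_before_verification=True,
        corrections_spread_slower_than_original=True,
    )
    print(cognitive_battle_begun(snapshot), observed_markers(snapshot))
```

Keeping the markers as named boolean fields makes each observation explicit and time-stampable in a log, rather than collapsing them into a single score the text never defines.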

"I would not treat 17 minutes as a literal constant. I would treat it as a planning threshold. If your response posture starts at 30 minutes, you are already operating inside an occupied information environment."

Calvin Klone, Former DIA Analyst · National Security and Narrative Intelligence

China floods the zone with "evidence" that it's an inside job: deepfakes of Secret Service agents turning away and leaked memos from "whistleblowers" (all fabricated, all traceable to state actors). Its angle: "America's elite are eating their own." Goal: make the U.S. look weak, divided, ungovernable.

They push a cross-spectrum bridge narrative designed to activate both ends of the political spectrum simultaneously. The same payload, different captions: one version routes through anti-imperialist distrust of intelligence agencies, the other through conspiratorial distrust of globalist elites. Both groups receive identical raw clips. The framing does the targeting.

They also leak "classified" audio: a politically salient synthetic clip framed as a leaked recording from a prior administration, joking about "taking out threats." Fake, but emotionally true enough. People don't fact-check when they're mad. Research on affect-driven processing consistently shows that high emotional arousal degrades verification behavior. The content doesn't need to be convincing. It needs to arrive first.

The Synthetic Witness Layer: The Threat You're Not Watching For

Beyond deepfakes and fabricated documents, China deploys a third content category that is harder to detect and faster to believe: AI-generated bystander accounts providing cross-platform eyewitness testimony. These are fully constructed personas with profile histories, location metadata, and posting patterns.

Deepfakes require the audience to evaluate media. Synthetic witnesses require them to evaluate people.

In a high-distrust environment where "anyone could have filmed it," fabricated eyewitness accounts from seemingly ordinary bystanders carry more persuasive weight than polished video content, which can be dismissed as "obviously staged." No current platform-level detection solution operates at scale. This is the capability that analysts are most underprepared for.

Hour 0–6 · Flood the Zone

State-linked accounts on Weibo and Douyin drop the first wave: ultra-realistic deepfakes. Secret Service agents visibly stepping aside in slowed-down drone footage (AI-generated, 60fps, perfect lip-sync). A "whistleblower memo" from a supposed NSA contractor appears on Telegram and is reposted to Reddit within minutes, watermarked with authentic-looking classification stamps.

Left-leaning audiences get the "military-industrial complex finally cashed in its chips" version. Right-leaning audiences get the "deep-state coup" version. Both groups receive the exact same raw clips. The only difference is the caption.

Hour 6–48 · Cross-Spectrum Goes Viral

Chinese state media publishes the first "investigative" pieces in English, framing the hit as a coordinated intelligence operation. Tens of thousands of freshly spun-up accounts flood Western platforms. By hour 36, #JFKDeepState is trending globally. American users do most of the amplification work. China supplies the original payload.

Rapid denial narratives spread across platforms within hours of satellite imagery going public. Bellingcat's open-source timeline work shows early circulation on Telegram migrating quickly to mainstream platforms. DFRLab's narrative tracking documented staged-scene counter-narratives and their cross-platform spread.

Charlie Kirk's assassination in September 2025 showed the same arc running without Russian direction at all. A domestic political target, a rooftop sniper, and within hours a cross-spectrum martyr narrative that neither party controlled and neither could stop. When the mechanic runs that fast on domestic fuel alone, the adversary's job in a presidential scenario is not to build the narrative from scratch. It is to accelerate one that is already forming.

Russia's approach is more chaotic and more personal. It weaponizes the suspect: call him lone wolf, ex-Marine, QAnon-adjacent. Russia doesn't invent him; it just amplifies him. RT runs a 24-hour special: "The Patriot Who Saw Too Much."

They dox his family, release edited videos of him ranting about "the deep state," then pivot: "See? He was right." Goal: Turn the killer into a martyr. Simultaneously, they push a "false flag" counter-narrative. The shooter was a Ukrainian asset. Or a Chinese plant. Muddy the water. Make every theory equally plausible.

Hour 0–6 · Weaponize Before the Body Is Cold

RT and Sputnik flip to wall-to-wall coverage. Within ninety minutes #PatriotWhoSawTooMuch is trending. The narrative is locked: lone wolf? Yes. But a lone wolf who was right.

Hour 6–48 · The Martyr Pivot

Family doxxing drops first. Then come the AI-edited garage rants. By hour 36 the story flips from "crazy gunman" to "tragic hero silenced by the machine." The emotional payload is pure venom.

Day 2–3 · False-Flag Cross-Pollination

Two parallel tracks launch: "Ukrainian asset" theory and "Chinese plant" theory. Neither needs to win. The point is to make the official "lone wolf" narrative look laughably incomplete.

Russian state media pushed Ukrainian intelligence attribution within the first hour of the attack, before any investigation had produced a finding. ISIS-K had already claimed responsibility. The false-flag counter-narrative machine was pre-built and pre-positioned, deployed on trigger — not constructed in response.

Endgame for Russia

They don't want America to pick a single villain. They want Americans to pick all of them at once. By day seven the country is fractured into hostile camps: one side canonizing the shooter as the new John Brown, another screaming "Russian psyop," and everyone convinced the institutions are lying. That is not a failure of the operation. That is the operation.

China supplies the narratives. Russia ignites them.

This is an analytic simplification. Real operations blur — state-linked networks do not stay in pure lanes, and coordination is indirect rather than scripted. But the heuristic is useful for mapping dominant patterns. China seeds volume, synthetic evidence, and structural cross-spectrum framing. Russia attaches identity, grievance, and symbolic meaning to that material. One creates the accusation. The other turns it into allegiance.

The Domestic Handoff

Foreign actors do not need to dominate the first wave for long. They only need to seed frames that domestic actors can carry in native language, native culture, native grievance. Once newsbrokers, influencers, crowd analysts, and partisan interpreters begin repeating the payload without foreign fingerprints, the frame stops looking foreign and starts looking like common sense.

That is the real transition point. Once the frame is socially validated and domestically scaled, the next question is no longer who started it. The question is how the ecosystem carries it across platforms, formats, and audiences until attribution becomes irrelevant. At that point, the first wave is no longer a burst of foreign influence. It is a domestic information environment carrying foreign-seeded structure forward on its own momentum.

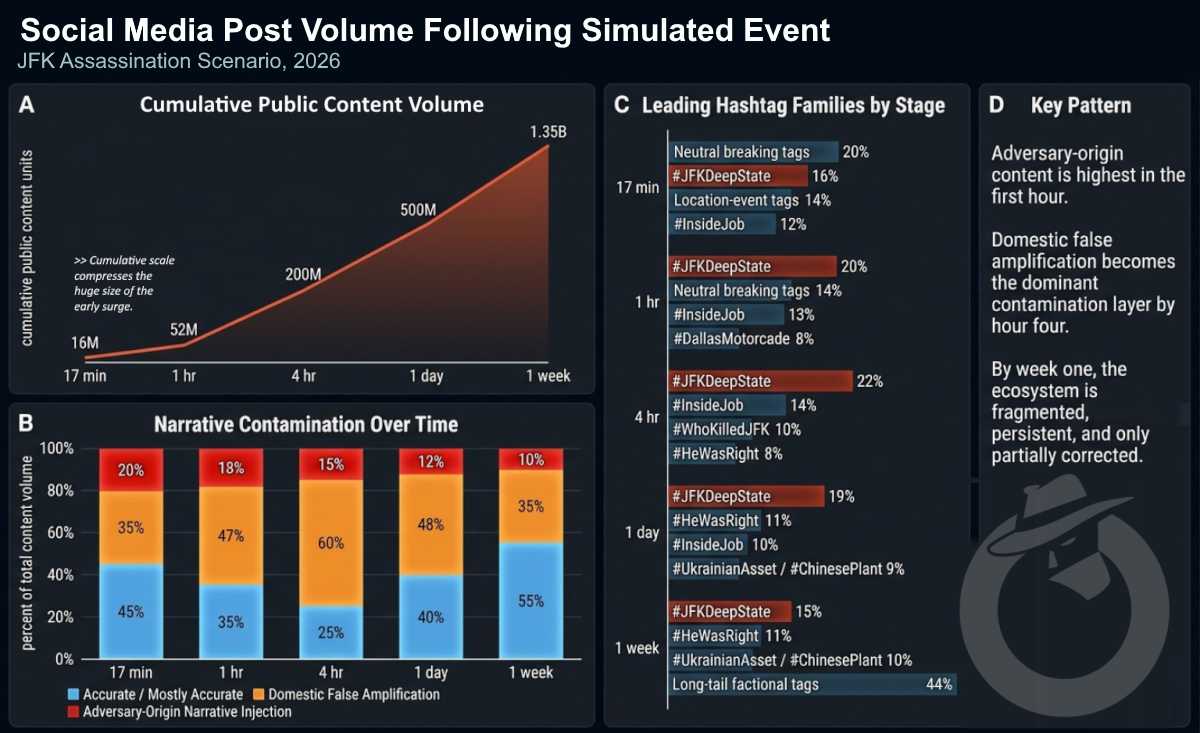

The spread pattern does not stay the same from hour to hour. In the opening minutes, adversaries matter most because they arrive prepared. But the first wave does not remain a foreign-origin problem for long. Once domestic actors begin carrying the same material across platforms and formats, the mechanism shifts. Spread stops depending primarily on adversary activity and starts depending on social adoption inside the domestic information environment.

Spread stages: Injection → Hardening → Handoff → Spillover → Self-sustaining

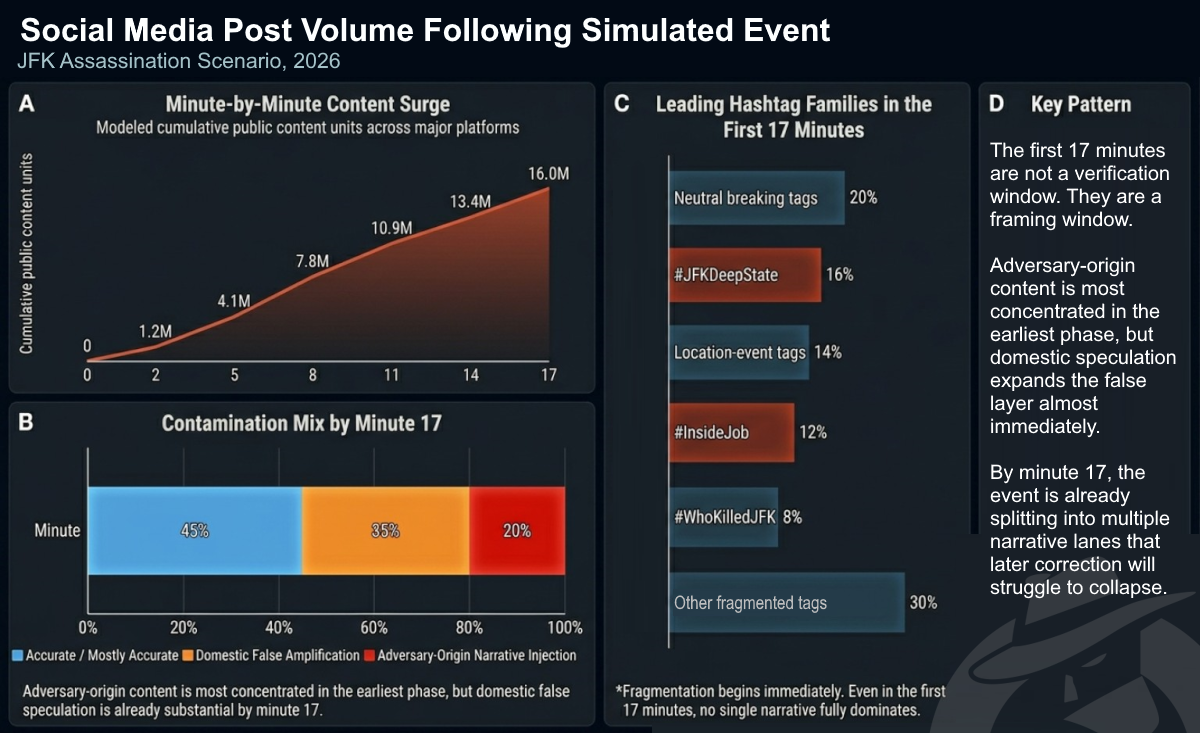

End of Week 1 (illustrative scenario figures): #JFKDeepState ~48M posts · #PatriotWhoSawTooMuch ~31M · each false-flag track >10M. No single narrative dominates. These are calibrated scenario projections, not empirical measurements.

By Day 3, the spread mechanism has shifted from adversary injection to domestic amplification. The false-flag crossover thread shows how incompatible attributions combine to make the official narrative look incomplete even when it is accurate.

1. Bank records → Chinese crypto wallet.

2. Edited meetup photo in Poland with "SBU handler."

They played him from BOTH sides. Lone wolf? No. Patsy.

An information attack on social media can cause shipping companies to reroute, insurers to revise their coverage language, and automated trading systems to trigger sell-offs, all before anyone has established what actually happened. The economic damage doesn't wait for the facts to settle. This is the part of modern information warfare that most people never see, because it happens in systems they don't normally watch.

The stock market falling by Day 7 is an outcome. The mechanism starts far earlier — in the quieter infrastructure of risk that sits underneath the physical economy: insurers, underwriters, freight planners, internal risk desks, automated trading systems, and institutional alert thresholds. These systems do not wait for public certainty. They react to instability under conditions of incomplete information.

The February 2026 Strait of Hormuz crisis provides the clearest precedent. The strait was not closed through military force alone. It was effectively closed through insurance. Protection and Indemnity clubs issued 72-hour war-risk cancellation notices. Additional War Risk Premiums jumped from 0.15% to over 1%. Major carriers rerouted within days — not because the physical battlespace had fully dictated it, but because the actuarial calculus made transit economically indefensible. That is the key lesson. Risk infrastructure can move faster than public consensus.
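To see why a move from 0.15% to over 1% changes routing decisions, a back-of-the-envelope calculation helps. The sketch assumes the premium is quoted as a percentage of insured hull value and charged per transit, and it uses a hypothetical $100 million hull. Actual policy terms, hull values, and any discounts are not modeled, and the function name is illustrative.

```python
def additional_war_risk_premium(hull_value_usd: float, rate_pct: float) -> float:
    """Premium for one transit, assuming it is charged as a percentage of hull value."""
    return hull_value_usd * rate_pct / 100.0


HYPOTHETICAL_HULL_USD = 100_000_000  # illustrative value, not drawn from the source

before = additional_war_risk_premium(HYPOTHETICAL_HULL_USD, 0.15)
after = additional_war_risk_premium(HYPOTHETICAL_HULL_USD, 1.0)

if __name__ == "__main__":
    print(f"Before: ${before:,.0f} per transit")
    print(f"After:  ${after:,.0f} per transit ({after / before:.1f}x)")
```

On those assumptions, the per-transit cost rises from about $150,000 to at least $1 million, roughly a 6.7-fold increase, before freight, crew, or delay costs are counted.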

The Cyber-to-Actuarial Transmission Path

"Risk systems do not wait for consensus. They react to uncertainty. In a contested information environment, that means narrative can move economic behavior before the factual picture is stable."

Bob Johnson, Former CIA Analyst

Economic Early-Warning Signals for Practitioners

Watch for early signs that uncertainty is entering risk systems — these signals precede headline market moves by hours to days:

- Changes in advisory language from insurers, maritime risk monitors, or freight security providers

- Additional War Risk Premium movements on U.S.-flagged routes

- P&I club advisory language citing domestic political risk

- Freight rate index deviations on trans-Pacific and trans-Atlantic corridors

- Options market volatility clustering around political event timelines

- Circulation of fabricated financial screenshots or false institutional alerts

- Divergence between physical conditions on the ground and the risk language used to price them
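The list above only helps if each signal has a pre-agreed trigger. A minimal sketch covering two of the quantitative signals follows. The threshold values (a 2x premium multiple, a 3-sigma freight index deviation) and all function names are hypothetical placeholders that a team would calibrate for itself; the qualitative signals, such as changes in advisory language, still require human review.

```python
from statistics import mean, stdev
from typing import Sequence


def premium_trigger(baseline_pct: float, current_pct: float, multiple: float = 2.0) -> bool:
    """Fire when the current war-risk rate reaches a set multiple of its baseline."""
    return current_pct >= baseline_pct * multiple


def freight_zscore(history: Sequence[float], latest: float) -> float:
    """Standard score of the latest index reading against its recent history.

    Requires at least two historical points (statistics.stdev raises otherwise).
    """
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is an unbounded deviation.
        if latest == mu:
            return 0.0
        return float("inf") if latest > mu else float("-inf")
    return (latest - mu) / sigma


def freight_trigger(history: Sequence[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Fire when the latest reading deviates from recent history by z_threshold or more."""
    return abs(freight_zscore(history, latest)) >= z_threshold


if __name__ == "__main__":
    print(premium_trigger(0.15, 1.0))                     # rate far beyond 2x baseline
    print(freight_trigger([100, 102, 98, 101, 99], 130))  # sharp break from a flat series
```

A z-score against recent history is one simple way to separate a genuine break from ordinary noise; it ignores seasonality and trend, so it is a starting point for a trigger rather than a finished model.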

The April 2025 '#TheBanksAreOutOfMoney' campaign established the template: doctored financial terminal screenshots creating false liquidity signals. In a post-assassination environment, fabricated Bloomberg screenshots move at the same speed as real data. Sentiment-analysis-driven automated systems can begin selling before the physical reality is clear. By the time official stabilizing statements arrive, the sell-off has already reset the baseline — and corrections don't reverse automated decisions already executed.

In a modern symbolic crisis, official resolution does not arrive into a neutral information environment. It arrives into one that has already been shaped by accusation, emotional alignment, and contested frames. By the time a suspect is identified, cornered, or killed, many audiences are no longer waiting to learn what happened. They are waiting to see which version of the story their side will claim.

That is why capture often accelerates fracture instead of closing it. Once the suspect is apprehended, the event does not become simpler. It becomes more narratively available. The martyr package deploys. The false-flag branches multiply. The official explanation is no longer received as resolution — it is received as one more contested input inside an already contaminated environment.

The central mistake is assuming that physical closure will produce narrative closure. It may do the opposite. Official action creates the next surge of symbolic content: the final photo, the death narrative, the last words package, the claim that the suspect was silenced before he could reveal the truth. Capture is not the end of the information operation. It is often the beginning of its most emotionally potent phase.

How the Media Fractures

Official resolution fails because the domestic information environment does not process facts uniformly. It processes them through fractured institutions, fragmented media systems, and politically loaded incentives. The same official development generates entirely different meanings depending on where it lands.

By Day 6, the adversary has largely withdrawn. The domestic ecosystem is self-sustaining. Posts circulating at this stage carry no adversary fingerprints, and they do not need them. The narrative has already been naturalized.

"The real kill shot isn't the bullet. It's the narrative environment that follows."

Ryan MacBeth, Intelligence Analyst and Information Warfare Consultant

Why Control Does Not Come Back with the Facts

Official resolution fails to restore control because the institutions producing the facts are no longer the only institutions producing meaning.

Once emotional alignment replaces evidentiary hierarchy, the release of new facts does not reliably reorganize the field. It may clarify the record for investigators. It may shape elite understanding. But it does not necessarily reverse mass belief formation at scale. By the time the official record is stronger, the social system may already be carrying weaker claims more effectively — because those claims are more emotionally usable.

This is why post-event press conferences are not enough. A better statement after the fact cannot solve a problem that has already become distributed, identity-bearing, and domestically self-sustaining. Control does not come back automatically with the facts. It has to be defended before the facts arrive.

Physical apprehension of a suspect does not close the information operation. It accelerates it. By the time capture occurs, the cognitive battle has been set for hours. Current institutional response doctrine is calibrated to the manhunt clock. Effective counter-narrative doctrine must be calibrated to the cognitive capture clock — and must therefore be pre-positioned before any trigger event, not improvised in response to one.

The first 60 minutes is not the time for comprehensive strategy. It is the time for pre-planned action. If cognitive capture begins before institutions can fully verify what happened, then improvised response will always arrive late. Effective early response is not creative. It is disciplined execution against a doctrine designed for a high-velocity information environment.

"Improvisation is not a response doctrine. In a high-velocity event, the first hour is where pre-made decisions either save you or expose you."

Calvin Klone, Former DIA Analyst · National Security and Narrative Intelligence

What to Do — Minute by Minute

What Has to Exist Before the Shot Is Fired

This is the part many organizations get wrong. They treat readiness as something they can assemble after the event. They cannot.

A functioning first-hour doctrine requires: pre-approved holding statements for likely crisis types; a staff communications policy that is already understood; assigned synthetic media triage capacity; misidentification monitoring protocols; live platform trust and safety contacts; defined actuarial monitoring triggers; and pre-briefed economic coordination pathways. Without those pieces in place, the first hour becomes an improvisation exercise inside an already occupied environment.

Four Findings for Practitioners

Go back to Dallas for a moment. Noon light. The motorcade stops. The phone comes out.

That person, whoever they are and wherever they're standing, is not a bad actor. They are not a propagandist. They are just a person who saw something extraordinary and is trying to make sense of it. They will share what they see. They will share what they feel. And mixed in with what they share, invisibly, will be content that was engineered months ago by people who anticipated a moment like this and built for it.

The real lesson of this scenario is not that adversaries are clever. It is that the information environment that follows a shock is structurally exploitable — and that the exploitation does not require the audience to be gullible. It only requires the audience to be human: stressed, uncertain, reaching for the first explanation that feels like an answer.

Understanding that dynamic does not make you immune to it. But it changes what you watch for. It changes what you share. And for anyone with responsibility — in communications, in security, in institutional leadership — it changes when the work has to begin. Not at the moment of the shot. Long before it.

The death is the trigger.

The narrative war is the event.

The preparation is everything that happens before both.

ObscureIQ OSINT Analysis · April 2026 · Scenario analysis, not a prediction. All simulated disinformation examples are fabricated for analysis. Every tactic described has a documented precedent in operations conducted between 2013 and 2024. Overall product confidence: B2 (usually reliable, probably true). Core tactic assessments grounded in fully documented operations. Scenario quantitative projections are analytical calibrations, not empirical claims.